Part I: The Crisis of the Void

Chapter 1: The Syntactic Illusion and the Exiled Mind

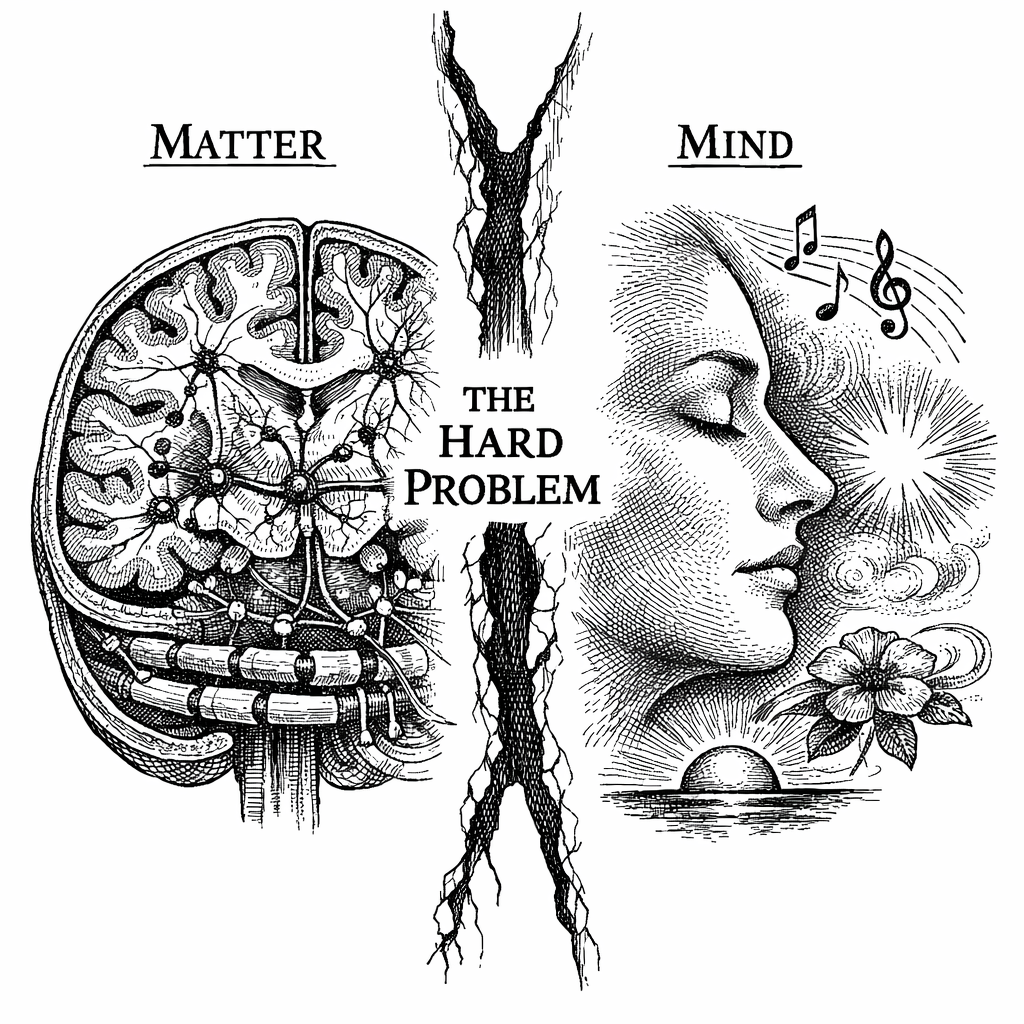

1.1 The Galilean Bargain

Look up at the night sky on a clear evening, far from the light pollution of a city. Viewed through the lens of modern astrophysics, you are not looking at a canopy of mythic figures, divine omens, or a celestial sphere rotated by primeval gods. You are observing a clockwork of mathematical syntax. You are watching thermonuclear fusion dictated by $E = mc^2$, the curvature of spacetime mapped by Albert Einstein's field equations, and the accelerating expansion of the void driven by dark energy.

For four hundred years, the scientific method has achieved extraordinary predictive power over the physical world. It split the atom, eradicated biological plagues, mapped the human genome, and placed boot prints in the lunar regolith. But this victory was purchased at a cost. It was achieved through a pragmatic compromise forged in the 17th century---a philosophical partition we might call the Galilean Bargain.

In 1623, the astronomer Galileo Galilei published The Assayer. Written as a polemic against a rival mathematician regarding the nature of comets, the text contained a philosophical maneuver that laid the groundwork for modern physics.

Galileo realized that to understand the mechanics of the cosmos, natural philosophy had to abstract away the subjective nature of human perception. He proposed that the Book of Nature is written in the language of mathematics. To read it clearly, we must separate the properties of reality into two distinct categories.

The first category, later named primary qualities (the label was popularized by John Locke rather than coined by Galileo), comprises the objective, measurable, geometric features of the world: mass, size, shape, position, number, and motion. These exist independently of an observer. If humanity went extinct tomorrow, a mountain would still possess mass, a river would still possess kinetic energy, and the Earth would still possess orbital angular momentum. They are the physical properties of the universe, existing out there in the dark, silent void.

The second category, later named secondary qualities, comprises the subjective experiences generated by our senses: the redness of a rose, the sting of a burn, the taste of honey, the sensation of falling, the smell of rain, the resonance of a minor chord.

Galileo argued that these secondary qualities do not exist in the external physical world. They are phenomena generated within the living body. A rose is not inherently red; it reflects electromagnetic radiation at a specific wavelength. A feather does not possess the physical property of a tickle. "Redness" and "tickles" belong entirely to the observer.

"I think that tastes, odors, colors, and so on are no more than mere names so far as the object in which we place them is concerned. They reside only in the consciousness. Hence if the living creature were removed, all these qualities would be wiped away and annihilated." [1]

With this epistemological division, Galileo (and soon after, René Descartes) excised the observer from the universe. They claimed the primary qualities for science and exiled the secondary qualities, the felt experience of being alive, to philosophy, theology, and art. Physics became the study of the stage. The observer was escorted out of the theater.

1.2 The Universe of Pure Syntax

This division was highly pragmatic. By setting aside the mathematically intractable subjectivity of human experience, physicists built a mechanical model of the cosmos. Isaac Newton used it to map the orbits of the planets and the parabolic arc of a cannonball. James Clerk Maxwell used it to unify electricity and magnetism into electromagnetic waves. Einstein used it to formalize the geometry of space and time.

But amid this technological success, an error of memory occurred: we forgot that we had removed the observer in the first place. By defining science as the study of a universe devoid of subjective experience, we wrote ourselves out of the script. We became phantoms haunting a clockwork cosmos. We built a precise map of reality, only to discover that the mapmakers were nowhere to be found on it.

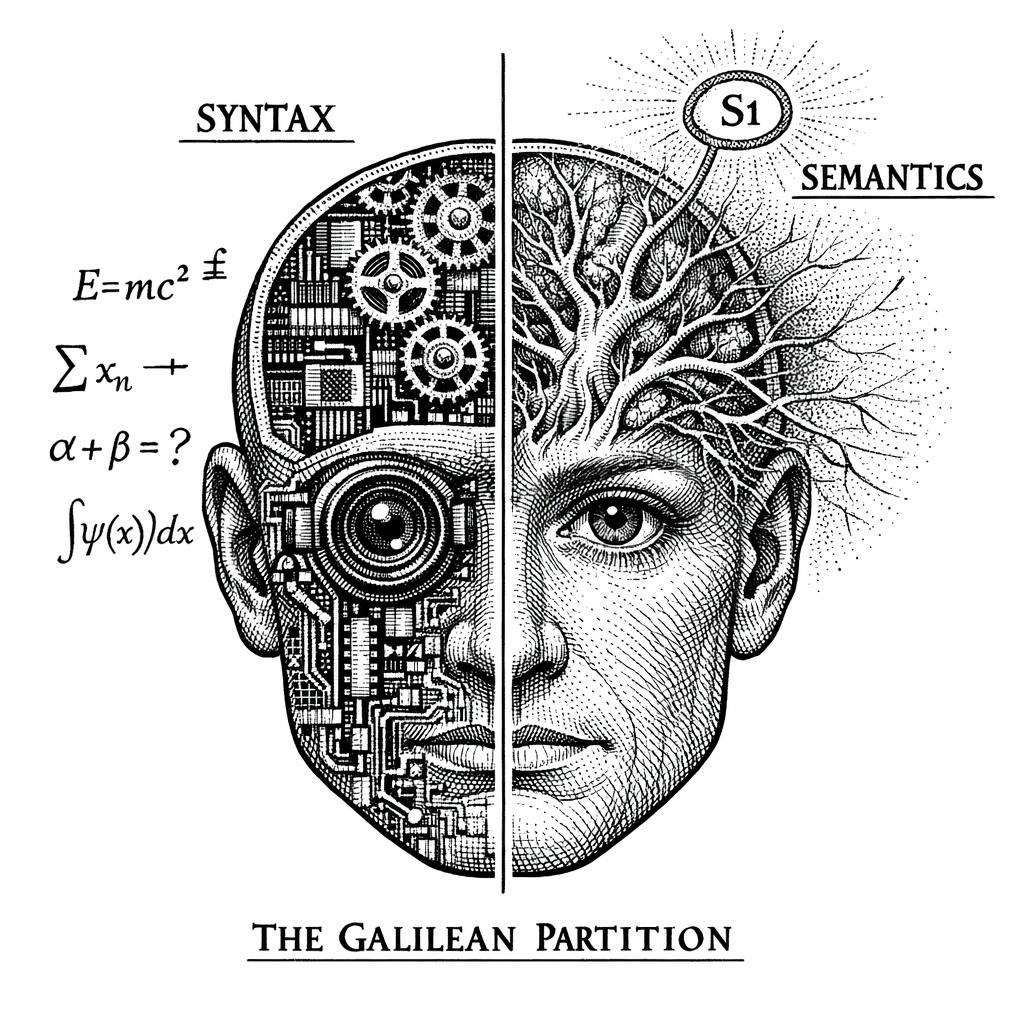

The result of the Galilean Bargain is the Syntactic Universe.

In logic and computer science, syntax refers to the rules, structure, and behavior of symbols. Semantics refers to the internal meaning and experiential understanding of those symbols.

A computer operates entirely on syntax. A processor shuffles electrical voltages through logic gates according to algorithmic rules. It can calculate the trajectory of a moon orbiting Jupiter or render a sunset in a video game. But the processor itself experiences nothing. It possesses no internal understanding of the moon or the sunset. It does not feel the warmth of the digital sun it renders, nor does it feel a sense of accomplishment when it wins a game of chess. It is a zombie mechanism, executing complex behaviors in total internal darkness.

For four centuries, classical physics has treated the universe as a syntactic processor. The Standard Model of particle physics describes the behavior of quarks, leptons, and gauge bosons. It details how they spin, attract, and repel. It is a comprehensive spreadsheet of interactions.

But behavior is all it describes.

Consider the experience of seeing the color red. Physics, chemistry, and biology provide a syntactic description of this event: energy released by fusion in the Sun's core eventually leaves its surface as a photon with a wavelength near 700 nanometers. This photon travels across 93 million miles of vacuum, enters Earth's atmosphere, strikes an apple, reflects into the human eye, passes through the cornea and lens, and triggers a conformational change in a cone photopigment, an opsin protein closely related to the rhodopsin found in rod cells. This shape-shift cascades into electrical signaling that propagates down the optic nerve and through the thalamus to the visual cortex, where color-responsive regions such as V4 come alive with the sodium and potassium currents of firing neurons.
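The numbers in this chain are easy to check. The short sketch below, which assumes nothing beyond standard physical constants, computes the energy carried by a single 700-nanometer photon and the time light needs to cross 93 million miles. The function names are illustrative, not part of any library.

```python
# Standard physical constants in SI units.
PLANCK_H = 6.62607015e-34        # Planck constant, J*s
LIGHT_SPEED = 299_792_458.0      # speed of light, m/s
ELECTRON_VOLT = 1.602176634e-19  # joules per electronvolt
METERS_PER_MILE = 1609.344


def photon_energy_ev(wavelength_m: float) -> float:
    """Energy of one photon, E = h * c / wavelength, in electronvolts."""
    return PLANCK_H * LIGHT_SPEED / wavelength_m / ELECTRON_VOLT


def light_travel_minutes(distance_miles: float) -> float:
    """Time for light to cover a distance in vacuum, in minutes."""
    return distance_miles * METERS_PER_MILE / LIGHT_SPEED / 60.0


if __name__ == "__main__":
    print(f"700 nm photon energy: {photon_energy_ev(700e-9):.2f} eV")
    print(f"Sun-to-Earth light travel time: {light_travel_minutes(93e6):.1f} min")
```

The result is about 1.77 electronvolts per photon and a journey of roughly 8.3 minutes. Every quantity in the chain can be computed this way; none of them is the experience of red.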

This chain of events is mathematically precise. Yet, within that sequence of moving atoms, cascading electricity, and neurotransmitter release, there is no mathematical variable for what it feels like to experience the qualitative sensation of "redness."

You can search the equations of quantum electrodynamics, and you will not find a variable for interiority. You will not find a mathematical operator for joy, sorrow, or the taste of salt. The inner, qualitative fire of existence---the Semantics of reality---was erased from the chalkboard four hundred years ago.

In 1988, theoretical physicist Stephen Hawking asked a question that exposed this omission:

"Even if there is only one possible unified theory, it is just a set of rules and equations. What is it that breathes fire into the equations and makes a universe for them to describe? The usual approach of science of constructing a mathematical model cannot answer the questions of why there should be a universe for the model to describe." [2]

For centuries, physicists operated under the assumption that mapping every primary quality---every atom, force, and trajectory---would eventually explain the secondary qualities. The expectation was that subjective experience would emerge from the mechanics. The syntax would eventually explain the semantics.

This expectation has not been met.

Today, as physics pushes against the microscopic limits of reality, and neuroscience maps the firing of individual synapses, the exiled ghost of the observer has returned. Science is confronted by two seemingly unrelated paradoxes at the frontier of human knowledge. Together, they threaten to tear the entire Galilean paradigm apart.

1.3 The First Abyss: The Miracle of the Meat Machine

The first paradox sits directly behind your eyes. In 1995, philosopher and cognitive scientist David Chalmers formalized a dilemma that had long accompanied the study of the brain. He called it the Hard Problem of Consciousness [3].

To understand the Hard Problem, we must contrast it with what Chalmers termed the "Easy Problems" of the brain. Neuroscience has been highly successful at mapping the mechanics of cognition. A neurobiologist can trace visual threat data from the optic nerve to the amygdala, map the release of corticotropin-releasing hormone, track the activation of the sympathetic nervous system, and measure the subsequent spike in heart rate. They can map how the hippocampus encodes spatial memories, enabling a rat to navigate a maze. They can track how the motor cortex coordinates the movement of a pianist's fingers.

These are the Easy Problems. They are "easy" not because they lack complexity---they involve massive biological networks requiring supercomputers to model---but because they are mechanical. They are problems of syntax. They describe how information is routed and how physical inputs reliably produce physical outputs.

The Hard Problem asks an ontologically different question: Why does all this biological plumbing feel like something from the inside?

When you bite into a lemon, why doesn't your brain simply process the chemical acidity in the dark, the way a computer processes a line of code? Why is the mechanical processing of citric acid accompanied by a private, subjective, mouth-puckering explosion of conscious experience?

Look closely at the chemistry of the brain. Zoom in on a synapse. You will see vesicles releasing glutamate into a synaptic cleft. You will see lipid bilayer membranes and voltage-gated ion channels opening and closing. You will see proteins folding.

But you will not find the color red. You will not find the feeling of grief, the smell of wet pavement, or the taste of coffee. You can observe a neuron indefinitely without finding an experience. There is no mathematical variable in the equations of biochemistry for "what it feels like."

Philosopher Frank Jackson illustrated this gap with a 1982 thought experiment known as "Mary's Room" [4].

Imagine a neuroscientist named Mary who is raised in a black-and-white room. She has never seen color; her monitors are grayscale, her books are printed in black ink. Through her studies, she learns every physical, chemical, and neurological fact about color vision. She possesses complete syntactic knowledge. She knows the electromagnetic wavelength of red light, the molecular structure of the human retina, and the algorithmic firing pattern of the visual cortex when a person looks at a tomato. She knows all the physical facts.

One day, the door to her room opens, and Mary walks outside. She looks up and sees a blue sky and a red apple.

Does Mary learn something new?

The intuitive answer is yes. She learns what it feels like to see red and blue. She discovers the qualitative, subjective experience---what philosophers call qualia.

If Mary learns something new upon leaving the room, it highlights a limitation in our scientific models: the physical facts are not all the facts. One can possess a comprehensive knowledge of physics and chemistry and still lack knowledge of the semantics of reality. You cannot deduce the feeling of sorrow, the taste of a lemon, or the sensation of a stubbed toe from the mass, charge, and velocity of an atom. They represent fundamentally different categories of existence.

Under the objective laws of classical physics and chemistry, biological functions do not strictly require subjective experience. Organisms could theoretically operate as "philosophical zombies"---systems capable of navigating environments, solving problems, and reproducing, but devoid of internal experience. If the movement of ions in a lithium battery does not generate consciousness, it remains unclear why the movement of ions in a skull creates the private, technicolor movie of your life.

Leading neuroscientific frameworks, such as Integrated Information Theory (IIT) and Global Workspace Theory (GWT), offer compelling models of how the brain operates. They propose that conscious states correspond to highly integrated information (IIT) or to information broadcast globally across cortical regions (GWT). While these theories help identify the physical correlates of consciousness, they leave the core ontological question unanswered. They map what the brain is doing while it is conscious, but they remain silent on how syntax miraculously transforms into semantics.

We are trapped in the first abyss: Neuroscience possesses an observer, but no fundamental equations to explain its existence.

1.4 The Second Abyss: The Quantum Catastrophe

While neurobiology investigates how matter creates an observer, theoretical physics faces a complementary anomaly: the physical universe refuses to exist in a definite state without an observer.

This is the Quantum Measurement Problem, a central unresolved issue in quantum mechanics.

In the classical, macroscopic world of Isaac Newton, reality is objective and deterministic. A tennis ball exists in a specific place, traveling at a specific speed, whether it is observed or not. The moon is there even when you close your eyes.

But in the 1920s, probing the subatomic realm, the architects of quantum theory (Niels Bohr, Werner Heisenberg, and Erwin Schrödinger) discovered that the microscopic universe behaves differently.

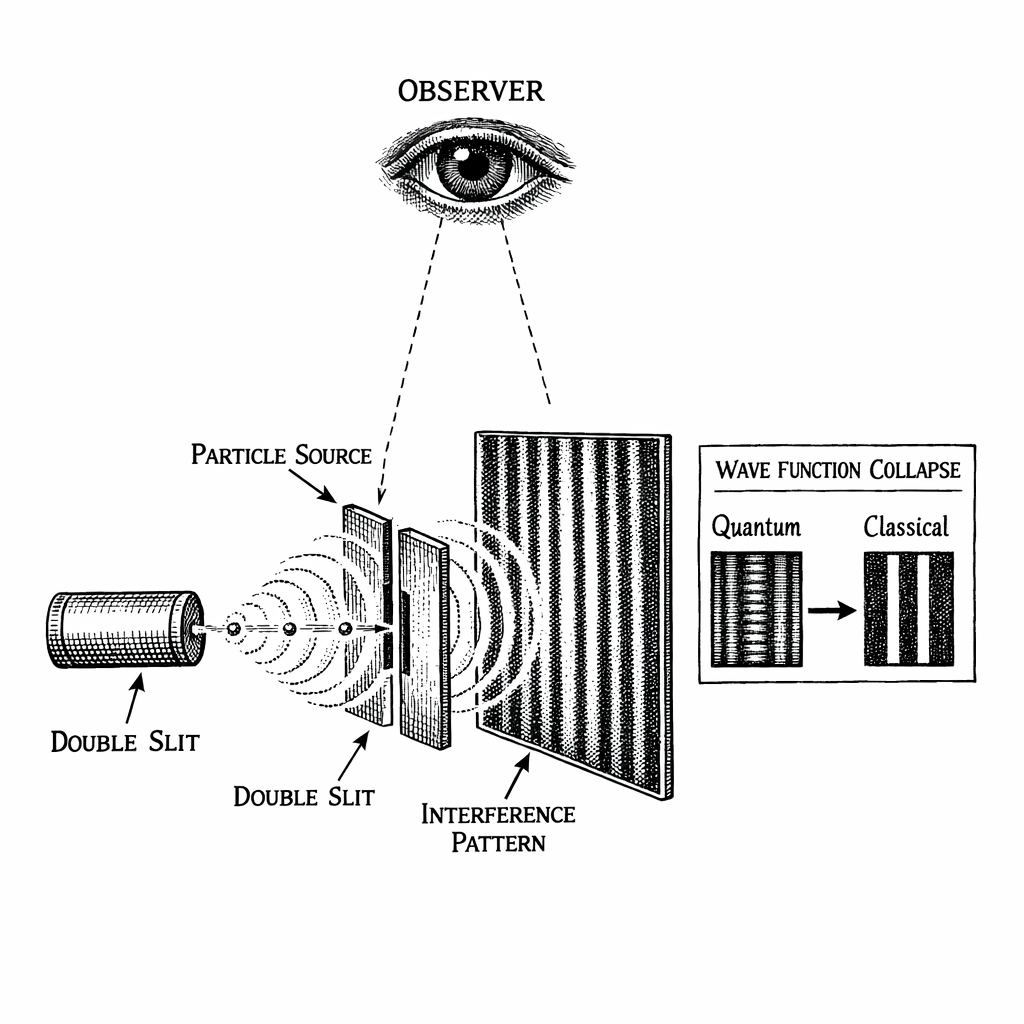

The foundational equation of quantum mechanics is the Schrödinger equation. It models particles not as solid billiard balls, but as smeared-out waves of probability amplitude. Before measurement, an electron does not have a definite position. It exists in a superposition, a mathematical ghost spread across all its potential positions simultaneously. The Schrödinger equation evolves this wave forward in time deterministically. Roger Penrose labeled this the U-process (Unitary evolution).
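For readers who want the symbols behind this description, the deterministic evolution can be written compactly. The notation here (state $\lvert\psi\rangle$, Hamiltonian $\hat{H}$, evolution operator $\hat{U}$) is the standard textbook convention rather than anything specific to this chapter, and the closed form for $\hat{U}$ assumes a Hamiltonian that does not change in time.

```latex
i\hbar \frac{\partial}{\partial t}\,\lvert\psi(t)\rangle = \hat{H}\,\lvert\psi(t)\rangle
\quad\Longrightarrow\quad
\lvert\psi(t)\rangle = \hat{U}(t)\,\lvert\psi(0)\rangle,
\qquad
\hat{U}(t) = e^{-i\hat{H}t/\hbar},
\qquad
\hat{U}^{\dagger}\hat{U} = \mathbb{1}
```

The last identity is what "unitary" means: the evolution preserves total probability and can, in principle, be run backward.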

But upon measurement, this behavior changes.

The moment an observation is made, the wave function collapses. The superposition resolves into a definite state, and the electron is found in one specific location. In Roger Penrose's terminology this is the R-process (Reduction of the state vector). It is discontinuous, non-deterministic, and irreversible.
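In standard textbook notation (the symbols $c_k$ and $\lvert k\rangle$ are conventional choices, not drawn from this chapter), the reduction step and the rule that assigns its probabilities, the Born rule, can be sketched as:

```latex
\lvert\psi\rangle = \sum_{k} c_{k}\,\lvert k\rangle
\;\xrightarrow{\;\text{measurement yields } k\;}\;
\lvert k\rangle,
\qquad
P(k) = \lvert c_{k}\rvert^{2},
\qquad
\sum_{k} \lvert c_{k}\rvert^{2} = 1
```

The theory supplies the odds $P(k)$ of each outcome but says nothing about which single outcome will occur in a given run.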

This phenomenon is demonstrated in the Double-Slit Experiment. If you fire individual particles at a barrier with two slits and do not monitor which slit each one takes, they act as waves of probability. Each particle passes through both slits simultaneously and interferes with itself, and the accumulated hits build up an interference pattern on the detector screen.

But here is where the Galilean divide collapses: The moment a measuring device is placed at the slits to observe which path the electron takes, the wave function collapses. The interference pattern vanishes. The probabilities resolve into a single, definite point. The electron behaves like a localized particle, passing through only one slit.
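The contrast between the two cases comes down to a single arithmetic difference: when the path is unmonitored, the amplitudes from the two slits are added and then squared; when the path is recorded, the probabilities are added instead. The sketch below illustrates this under idealized assumptions (far-field geometry, equal unit amplitudes, no single-slit envelope, perfectly distinguishable paths). The parameter values and function names are hypothetical and chosen only for illustration.

```python
import cmath
import math


def slit_amplitudes(x, wavelength, slit_separation, screen_distance):
    """Unit-magnitude amplitudes reaching screen position x from each slit.

    Small-angle, far-field approximation; the single-slit envelope is ignored.
    """
    half_phase = math.pi * slit_separation * x / (wavelength * screen_distance)
    return cmath.exp(1j * half_phase), cmath.exp(-1j * half_phase)


def intensity_unmonitored(x, wavelength, slit_separation, screen_distance):
    """No which-path record: amplitudes add first, then the sum is squared."""
    a1, a2 = slit_amplitudes(x, wavelength, slit_separation, screen_distance)
    return abs(a1 + a2) ** 2


def intensity_which_path(x, wavelength, slit_separation, screen_distance):
    """Path recorded: each amplitude is squared first, then probabilities add."""
    a1, a2 = slit_amplitudes(x, wavelength, slit_separation, screen_distance)
    return abs(a1) ** 2 + abs(a2) ** 2


def fringe_visibility(intensities):
    """(max - min) / (max + min): 1 for perfect fringes, 0 for a flat pattern."""
    brightest, darkest = max(intensities), min(intensities)
    return (brightest - darkest) / (brightest + darkest)


# Hypothetical illustrative setup: 50 pm wavelength, 1 micron slit spacing.
WAVELENGTH = 50e-12
SLIT_SEPARATION = 1e-6
SCREEN_DISTANCE = 1.0
positions = [(i - 200) * 0.5e-6 for i in range(401)]

unmonitored = [
    intensity_unmonitored(x, WAVELENGTH, SLIT_SEPARATION, SCREEN_DISTANCE)
    for x in positions
]
monitored = [
    intensity_which_path(x, WAVELENGTH, SLIT_SEPARATION, SCREEN_DISTANCE)
    for x in positions
]

if __name__ == "__main__":
    print("fringe visibility, unmonitored:", round(fringe_visibility(unmonitored), 3))
    print("fringe visibility, which-path: ", round(fringe_visibility(monitored), 3))
```

In this toy model the unmonitored pattern swings between bright and dark bands, while the which-path pattern is flat. The cross term that produces the fringes exists only when the amplitudes are allowed to add.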

The physical behavior of the system changes depending on whether it is observed. It remains in a state of statistical potential until a measurement occurs.

For a century, physics has developed various interpretations to explain this measurement problem, often seeking to avoid assigning a special role to human consciousness.

Mathematician John von Neumann formalized the implications of this through the "von Neumann Chain" [5]. If an electron is in a superposition and a mechanical camera interacts with it, the camera does not collapse the wave function. According to the linear mathematics of quantum mechanics, the camera becomes entangled with the electron. The camera enters a superposition. If a computer reads the camera, the computer enters a superposition. If a physicist looks at the computer screen, their eyes, optic nerve, and visual cortex---composed of the same atoms as the electron---should mathematically enter a state of superposition as well.

The mathematics suggest that the superposition propagates outward, encompassing everything it interacts with. The physical universe, governed by these linear equations, cannot collapse itself.
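The chain can be written out in a few lines. The labels below are illustrative assumptions: $\lvert L\rangle$ and $\lvert R\rangle$ for two electron paths, $\lvert C\rangle$ for camera states, and $\lvert O\rangle$ for the states of the physicist's visual system.

```latex
\bigl(\alpha\lvert L\rangle + \beta\lvert R\rangle\bigr)\otimes\lvert C_{0}\rangle\otimes\lvert O_{0}\rangle
\;\longrightarrow\;
\bigl(\alpha\lvert L\rangle\lvert C_{L}\rangle + \beta\lvert R\rangle\lvert C_{R}\rangle\bigr)\otimes\lvert O_{0}\rangle
\;\longrightarrow\;
\alpha\,\lvert L\rangle\lvert C_{L}\rangle\lvert O_{L}\rangle
+ \beta\,\lvert R\rangle\lvert C_{R}\rangle\lvert O_{R}\rangle
```

Each arrow is ordinary linear evolution. At no step does either term disappear; the superposition simply acquires another factor.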

Yet, collapse occurs. We do not experience macroscopic superpositions; we experience a definite reality. Where does the chain stop? Where does reality actually become solid?

In 1961, Nobel laureate Eugene Wigner took von Neumann's mathematics to their logical conclusion. Wigner argued that a physical system cannot measure itself. To break the chain of quantum entanglement, the system must encounter something outside the purely physical laws of linear evolution.

"It was not possible to formulate the laws of quantum mechanics in a fully consistent way without reference to the consciousness. The very study of the external world led to the conclusion that the content of the consciousness is an ultimate reality." [6]

Many physicists sought alternatives to this kind of conclusion. One route had already been opened in 1957, when Hugh Everett proposed what became known as the Many-Worlds Interpretation, which gives consciousness no fundamental role in physics. Everett argued that the wave function never collapses. Instead, every possible outcome of a quantum event is realized in a branching multiverse. An observer sees the electron go left in one branch, while a duplicate observer sees it go right in another.

Think about the ontological commitment this requires. Mainstream, highly rational scientists are willing to propose the creation of infinite, undetectable parallel universes, generating trillions of new universes every millisecond, simply to avoid admitting that consciousness plays a foundational role in physics. The Many-Worlds Interpretation is mathematically elegant, but philosophically gluttonous.

We are trapped in the second abyss: Physics has equations, but they explicitly require an observer that physics cannot define.

1.5 The Category Error of "Emergence"

Let us take a step back and look at the geometry of our ignorance.

Science frequently treats the Hard Problem of Consciousness and the Quantum Measurement Problem as separate issues. Neurobiologists attend conferences on the brain; quantum physicists attend conferences on particle fields. Rarely do they speak the same language.

But look at the symmetry of their mysteries:

The Hard Problem asks: How does physical matter create a non-physical observer?

The Measurement Problem asks: How does a non-physical observer collapse physical matter?

The framework of this book proposes a paradigm shift: These are not two different problems. They are the same geometric phenomenon viewed from two different dimensional perspectives.

They are the lock and the key. We have been trying to solve a jigsaw puzzle by insisting that the two most important pieces belong to different boxes.

To bridge this chasm, we must first address a common assumption in modern cognitive science: the doctrine of Strong Emergence.

When explaining consciousness, physicalist models often propose that subjective experience is an emergent property of complex computation. They suggest that if enough syntactic processors are networked together, subjective experience arises, just as the wetness of water emerges from hydrogen and oxygen atoms.

Logically, this represents a category error.

In physics, weak emergence is well understood. The wetness of water is a macroscopic description of the electromagnetic bonds between molecules. Temperature is a macroscopic description of the average kinetic energy of moving atoms. Traffic jams emerge from the collective braking of individual cars. In every case of emergence in physics, complex quantitative behavior is derived from simpler quantitative parts. It is a movement from syntax to syntax.
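Temperature makes the point concrete. For an ideal monatomic gas, the average kinetic energy per atom equals (3/2) k_B T, so the macroscopic number on a thermometer can be recovered from nothing but a list of atomic velocities. The sketch below assumes an ideal gas of argon atoms with thermally distributed velocities; the function names and the choice of argon are illustrative assumptions.

```python
import random

BOLTZMANN_K = 1.380649e-23  # Boltzmann constant, J/K
ARGON_MASS = 6.6335e-26     # approximate mass of one argon atom, kg


def sample_velocities(count, temperature, mass, rng):
    """Draw 3D velocities whose components are Gaussian with variance k_B*T/m."""
    sigma = (BOLTZMANN_K * temperature / mass) ** 0.5
    return [
        (rng.gauss(0.0, sigma), rng.gauss(0.0, sigma), rng.gauss(0.0, sigma))
        for _ in range(count)
    ]


def temperature_from_motion(velocities, mass):
    """Recover temperature from microscopic motion: <KE> = (3/2) * k_B * T."""
    total_ke = sum(
        0.5 * mass * (vx * vx + vy * vy + vz * vz) for vx, vy, vz in velocities
    )
    mean_ke = total_ke / len(velocities)
    return 2.0 * mean_ke / (3.0 * BOLTZMANN_K)


if __name__ == "__main__":
    rng = random.Random(42)
    atoms = sample_velocities(50_000, 300.0, ARGON_MASS, rng)
    print(f"temperature recovered from atomic motion: "
          f"{temperature_from_motion(atoms, ARGON_MASS):.1f} K")
```

The recovered value lands close to the 300 K used to generate the velocities, with a small statistical scatter. Numbers go in and a number comes out, which is exactly what is meant by a movement from syntax to syntax.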

Consciousness presents an ontological rupture. You cannot derive semantics from syntax. You cannot deduce a qualitative feeling from the spatial arrangement of sodium ions. Proposing strong emergence is akin to suggesting that if you arrange enough inanimate metal gears into a complex clock, the clock will eventually feel anxious about being late.

Whether you have ten neurons or ten trillion neurons, you still just have moving meat.

If you build a supercomputer simulation of a hurricane, mapping every atom of wind and rain in code, the computer does not get wet. The simulation does not blow away the laboratory. Simulating physical syntax does not generate physical reality. Similarly, simulating the neural firing of a brain maps the syntax but does not generate a subjective experience. It generates a complex dataset.

Furthermore, evolutionary biology operates on energy efficiency. The human brain accounts for about 2% of the body's weight but consumes roughly 20% of its caloric energy. If consciousness is merely an epiphenomenon, a passive byproduct that observes the body act but possesses no causal power to influence physical behavior, natural selection would have ruthlessly bred it out of us millions of years ago. A philosophical zombie would require fewer calories to survive than a conscious human.

To survive the evolutionary filter, consciousness must possess causal power. It must be capable of physically moving matter. It must be doing something that classical biology cannot do on its own.

If consciousness does not simply emerge from complex syntax, and if it possesses the causal power to collapse wave functions, we must pursue a new geometric architecture. We must apply the same logic to consciousness that Albert Einstein applied to gravity.

1.6 The Geometric Leap: Introducing the Semantic Dimension

Before 1915, the mechanism of gravity was a mystery. Isaac Newton had written the equations for it, but he did not know what it was. It appeared as an instantaneous force pulling planets through an empty void---an "action at a distance" that Newton himself questioned.

Einstein resolved this by proposing an ontological shift: Gravity was not a force operating in space. It was the geometry of spacetime itself. A bowling ball on a trampoline curves the fabric around it, and smaller objects simply roll into the depression. There was no invisible tether; there was only geometry. Space was not an empty stage; it was an active participant.

We must apply a similar conceptual leap to the mind.

Consciousness is not a byproduct generated by the chemical engine of the brain. The brain no more "secretes" consciousness than a television set secretes the broadcast signal. The framework proposes that consciousness is a fundamental, structured geometric dimension of reality. Just as the universe possesses mass, charge, and spacetime curvature, it possesses the fundamental geometric property of interiority.

How can consciousness be modeled as a geometric dimension? How do we integrate the mind into the fabric of the universe without resorting to panpsychism (the assumption that fundamental particles possess awareness)?

We utilize a standard tool in theoretical physics for unifying disparate phenomena: we introduce an extra dimension.

In 1919, mathematician Theodor Kaluza sent a paper to Albert Einstein; it was published in 1921. Kaluza had taken Einstein's equations of General Relativity, which operate in 4 dimensions (three of space, one of time), and formulated them in 5 dimensions.

In 5 dimensions, the equations separated into two parts: one described Einstein's gravity, and the other described James Clerk Maxwell's electromagnetism. By adding a spatial dimension, Kaluza unified gravity and light into a single geometric framework. Gravity and light were the same thing, rippling in different dimensions.
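Kaluza's construction can be sketched in one line of notation. The form below is one common textbook convention, not the author's own; the symbols $\hat{g}_{AB}$ (5D metric), $g_{\mu\nu}$ (4D metric), $A_{\mu}$ (electromagnetic potential), and $\phi$ (a scalar field) are assumptions of this sketch, and numerical constants vary between references.

```latex
\hat{g}_{AB} =
\begin{pmatrix}
g_{\mu\nu} + \phi^{2} A_{\mu} A_{\nu} & \phi^{2} A_{\mu} \\
\phi^{2} A_{\nu} & \phi^{2}
\end{pmatrix},
\qquad
F_{\mu\nu} = \partial_{\mu} A_{\nu} - \partial_{\nu} A_{\mu}
```

If nothing depends on the fifth coordinate and $\phi$ is set to 1, the 5D curvature scalar reduces, up to those convention-dependent constants, to the 4D combination

```latex
\hat{R} = R - \tfrac{1}{4}\,F_{\mu\nu}F^{\mu\nu}
```

The first term is Einstein's gravity and the second is Maxwell's electromagnetism. The electromagnetic potential is simply the part of the 5D metric that mixes the ordinary directions with the extra one.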

But why was this 5th dimension unobservable? In 1926, physicist Oskar Klein provided an answer: compactification.

Klein proposed that while the three dimensions of space are extended, the 5th dimension is curled up onto itself into a microscopic circle (mathematically denoted as an $S^1$ topology).

Think of a garden hose stretched across a lawn. From a distance, the hose appears as a 1-dimensional line. You can only define a position along its length. But viewed closely, an ant walking on the hose can move forward and backward, and it can also walk around the circular circumference. That circular direction represents a compactified dimension. It exists at every point in space, but is curled up so tightly (near the Planck scale of roughly $10^{-35}$ meters) that macroscopic objects cannot move through it.
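The scale involved can be computed directly. The sketch below derives the Planck length from three fundamental constants and then estimates the energy needed to excite motion around a circle of that radius, using the standard result that momentum around a circle of radius R comes in steps of ħ/R. Treating the excitation as a massless mode with energy n·ħ·c/R is a simplifying assumption of this sketch, and the function names are illustrative.

```python
import math

HBAR = 1.054571817e-34       # reduced Planck constant, J*s
GRAVITATIONAL_G = 6.67430e-11  # Newton's constant, m^3 kg^-1 s^-2
LIGHT_SPEED = 299_792_458.0  # speed of light, m/s


def planck_length() -> float:
    """Planck length, sqrt(hbar * G / c^3), in meters."""
    return math.sqrt(HBAR * GRAVITATIONAL_G / LIGHT_SPEED ** 3)


def circle_mode_energy_joules(mode_number: int, radius_m: float) -> float:
    """Energy of the n-th momentum mode around a circle of radius R.

    Assumes a massless excitation, so E = n * hbar * c / R.
    """
    return mode_number * HBAR * LIGHT_SPEED / radius_m


if __name__ == "__main__":
    radius = planck_length()
    print(f"Planck length: {radius:.3e} m")
    print(f"first mode energy at that radius: "
          f"{circle_mode_energy_joules(1, radius):.3e} J")
```

The Planck length comes out near 1.6 × 10^-35 meters, and the first excitation costs on the order of a billion joules concentrated in a single quantum. That is why nothing in everyday physics, or in any particle accelerator, can be seen moving around such a circle.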

Today, String Theory and M-Theory rely on Kaluza-Klein compactification, proposing up to 11 dimensions to resolve fundamental equations. Physics already utilizes hidden dimensions woven into the fabric of the cosmos.

The framework proposes an evolution of this mathematical architecture: Dimensional Field Theory (DFT).

In the DFT framework, spacetime is not simply the 4-dimensional manifold we perceive. It possesses an additional compactified dimension, a circle.

However, this is not a spatial dimension governing the charge of an electron or the vibration of a string. It is the Semantic Dimension. It serves as the topological axis of subjective awareness, meaning, and inner experience.

Consciousness is not a spatial location; it is the geometric axis of interiority. You do not move through it with your physical body. Your awareness occupies mathematical coordinates within it. The physical 3D universe measured by physical instruments is a projection of a higher-dimensional unified field.

When your mind shifts its focus, when you actively pay attention to a thought or a visual stimulus, you are not just undergoing a chemical reaction in your prefrontal cortex. You are executing a mathematical translation along this axis. Your observer wave function is traversing the topology of the Semantic Dimension.

This geometry provides a bridge across the abyss. The physical universe is a wave function of syntactic possibilities requiring a mechanism to select a definite reality. As the coming chapters set out rigorously, the framework draws on information theory to supply that mechanism. The act of "paying attention" is not passive observation. In this framework, focused attention decreases the informational entropy of your mental state, generating a quantifiable thermodynamic force: a Fisher Information gradient.

This thermodynamic gradient crosses from the Semantic Dimension into 3D space. Because the universe abhors an extreme thermodynamic gradient, it is forced to balance the cosmic ledger. It collapses the probabilities of the quantum wave function into a singular, definite physical history.
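The two information-theoretic quantities named here have standard definitions, and a toy case shows how they move in opposite directions. The sketch below is only an illustration of those definitions, not the framework's own model, which later chapters develop: it assumes, purely for the example, that a state can be represented by a Gaussian distribution of width sigma, so that a narrower distribution stands in for sharper focus.

```python
import math


def gaussian_entropy_nats(sigma: float) -> float:
    """Differential entropy of a Gaussian: 0.5 * ln(2 * pi * e * sigma^2)."""
    return 0.5 * math.log(2.0 * math.pi * math.e * sigma ** 2)


def gaussian_fisher_information(sigma: float) -> float:
    """Fisher information about the mean of a Gaussian: 1 / sigma^2."""
    return 1.0 / sigma ** 2


if __name__ == "__main__":
    # Hypothetical widths: a diffuse state narrowing to a focused one.
    for sigma in (2.0, 1.0, 0.5):
        print(
            f"sigma={sigma:>4}: entropy={gaussian_entropy_nats(sigma):+.3f} nats, "
            f"Fisher information={gaussian_fisher_information(sigma):.2f}"
        )
```

As the distribution narrows, its entropy falls and its Fisher information rises. Whether attention behaves this way, and whether the resulting gradient can act on a quantum system, is the claim the rest of the book has to defend.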

Hawking asked what breathes fire into the equations. The answer is that the equations are incomplete. The fire is the geometry of the observer. Your conscious attention is the geometric anvil upon which the probabilities of the universe are hammered into physical fact.

1.7 The Biological Cliff: The Thermal Storm

We have outlined a framework where mind and matter are united by geometry. But a mathematical theory of consciousness must survive the realities of biology.

If consciousness requires a quantum geometric property (a thermodynamic force bridging dimensions), how does it survive the warm, wet, roughly 310-Kelvin environment of the human brain?

Standard physics dictates that such quantum states should decohere in the thermal noise of the body in a fraction of a picosecond. If the framework claims the brain is interacting with the Semantic Dimension, the laws of thermodynamics demand to know how.

To cross this gap, we must leave the realm of theoretical physics and plunge into quantum biology. We must look beyond classical neuroscience to discover how evolutionary biology engineered an atomic antenna capable of surviving the thermal storm.

References --- Chapter 1

[1] Galilei, G. (1957). The Assayer. In S. Drake (Ed. & Trans.), Discoveries and Opinions of Galileo. Doubleday. (Original work published 1623.)

[2] Hawking, S. (1988). A Brief History of Time. Bantam Books.

[3] Chalmers, D. J. (1995). Facing Up to the Problem of Consciousness. Journal of Consciousness Studies, 2(3), 200-219.

[4] Jackson, F. (1982). Epiphenomenal Qualia. Philosophical Quarterly, 32(127), 127-136.

[5] von Neumann, J. (1955). Mathematical Foundations of Quantum Mechanics (R. T. Beyer, Trans.). Princeton University Press. (Original work published 1932.)

[6] Wigner, E. P. (1961). Remarks on the Mind-Body Question. In I. J. Good (Ed.), The Scientist Speculates (pp. 284-302). Heinemann.
